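Starting this week, I will post about reinforcement learning algorithms every Monday, following Sutton's textbook Reinforcement Learning. The plan is roughly one section per week, so the series should wrap up in a little over two months; anyone interested is welcome to study along. To kick things off, today I will cover some basics and historical background, and then try to train a tic-tac-toe agent with reinforcement learning. Today's material corresponds to Chapter 1 of the book.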

Reinforcement Learning

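When we think about how humans actually learn, the first thing that comes to mind is probably learning by interacting with the environment. When an infant waves its arms or looks around, it forms a rich variety of sensory connections with its environment. From these connections, a person accumulates a wealth of information about cause and effect, about the consequences of actions, and about how to achieve goals. Throughout our lives, such interactions are undoubtedly a major source of knowledge about our environment and ourselves. Whether we are learning to drive or to hold a conversation, we are acutely aware of how our surroundings respond to what we do, and we try to influence what happens through our behavior. Learning from interaction is a foundational idea underlying nearly all theories of learning and intelligence. The book explores a computational approach to learning from interaction: rather than theorizing directly about how people or animals learn, it studies idealized learning situations and evaluates the effectiveness of various learning methods. This approach is called reinforcement learning, and compared with other machine learning approaches it is much more focused on goal-directed learning from interaction.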

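The reinforcement learning problem is about getting an agent to learn what to do: how to choose its next action, given the current state of the environment and of itself, so as to maximize the reward it obtains from the environment.

- The agent's current decisions affect the state of the environment and of the agent itself, and therefore what happens next.

- The agent is not told which actions to take; it must discover through trial which actions yield the most reward.

- The action taken now affects the state of the agent and the environment many time steps later, and through that all subsequent rewards obtained from the environment.

These are the three most important features of the reinforcement learning problem!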

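Any method well suited to solving this kind of problem is considered a reinforcement learning method. Reinforcement learning differs from supervised learning, which learns from a set of labeled examples, where each example describes a situation together with the correct action the agent should take in that situation. The aim of such learning is for the agent to extrapolate, or generalize, its responses so that it acts correctly in situations outside the training set. This is an important kind of learning, but on its own it is not adequate for learning from interaction. In interactive problems it is often impractical to obtain the optimal action for every state; we usually need many attempts before we can judge how good a decision in a given state is, which means the agent has to learn from its own experience. Reinforcement learning also differs from unsupervised learning, which is typically about finding structure hidden in collections of unlabeled data. The terms supervised learning and unsupervised learning may appear to cover all machine learning problems, but they do not. It is tempting to think of reinforcement learning as a kind of unsupervised learning because it does not rely on labeled training data, but reinforcement learning tries to maximize reward rather than to find hidden structure.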

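One of the challenges that arises in reinforcement learning is the trade-off between exploration (explore) and exploitation (exploit). To obtain more reward, a reinforcement learning agent prefers actions that it has tried in the past and found to be effective in producing reward. But to discover such actions, it has to try actions that it has not selected before. The agent must exploit what it already knows in order to obtain reward, but it must also explore to some extent in order to make better choices in the future.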
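One simple way to balance the two is an epsilon-greedy rule, which this post also uses later for the tic-tac-toe agent. The sketch below is only an illustration: the function name `epsilon_greedy` and the parameter `explore_prob` are assumptions made for this example.

```python
import random

def epsilon_greedy(values, explore_prob, rng=random):
    """Pick an action index: explore with probability explore_prob, else exploit.

    values holds one estimated value per action (hypothetical estimates).
    """
    if rng.random() < explore_prob:
        # Explore: choose any action uniformly at random.
        return rng.randrange(len(values))
    # Exploit: choose the action with the highest current estimate.
    return max(range(len(values)), key=lambda i: values[i])
```

With `explore_prob=0.0` the rule is purely greedy; with `explore_prob=1.0` it ignores the estimates entirely.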

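One of the most exciting aspects of modern reinforcement learning is its substantive and fruitful interaction with other engineering and scientific disciplines. For example, some reinforcement learning methods use parameterized approximation to address the classical "curse of dimensionality" in operations research and control theory. Reinforcement learning has also interacted strongly with psychology and neuroscience, with substantial benefits going both ways. Of all the forms of machine learning, reinforcement learning is the closest to the kind of learning that humans and other animals do, and many of its core algorithms were originally inspired by biological learning.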

Examples

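Some real-life examples and applications make reinforcement learning easier to understand.

- A chess player decides each move both by anticipating what might happen next and by an intuitive judgment of the desirability of particular positions and moves.

- An adaptive controller adjusts the operating parameters of a petroleum refinery in real time. The controller optimizes the yield/cost/quality trade-off according to a given cost function, rather than sticking strictly to the set points originally suggested by engineers.

- A gazelle calf starts struggling to its feet minutes after being born. Half an hour later it can run at 20 miles per hour.

- A robot vacuum decides whether it should enter a new room to collect more trash or start heading back because its battery is low. It makes the decision based on the current battery charge and on its past experience of finding the charger.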

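- Phil prepares his breakfast. Even this seemingly mundane activity reveals a complex web of conditional behavior and interlocking goals and subgoals: walking to the cupboard, opening it, selecting a cereal box, then reaching for, grasping, and retrieving the box. Other complex, tuned, interactive sequences of behavior are required to obtain a bowl, a spoon, and a milk jug. Each step involves a series of eye movements to gather information and to guide the movement of the body. Rapid judgments are made continually about how to carry the objects, or whether it is better to ferry some of them to the dining table before fetching the others. Throughout, each step is guided by goals and serves other goals, such as having the spoon to eat with once the cereal is prepared and ultimately obtaining nourishment. Whether or not he is aware of it, Phil is accessing information about the state of his body, and this information determines his nutritional needs, level of hunger, and food preferences; together with information about his surroundings, it determines the decisions he makes in this sequence of events.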

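A striking feature is that all of these examples involve interaction between an actively deciding agent and its environment, in which the agent tries to achieve a goal despite uncertainty about the environment. The agent's actions affect the future state of the environment (for example, the next chess position, the level of the refinery's reservoirs, the robot's next location and the future charge of its battery), and thereby the options available to the agent later on. Choosing correctly therefore requires taking the delayed consequences of actions into account, which may call for some foresight or planning. At the same time, in all these examples the effects of actions cannot be fully predicted, so the agent must monitor its environment in real time and react appropriately. For example, Phil must watch the milk he pours into his bowl to keep it from overflowing. All of these examples involve explicit goals: the chess player knows whether he wins, the refinery controller knows how much petroleum is being produced, and so on.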

Elements of Reinforcement Learning

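Beyond the agent and the environment, a reinforcement learning system has four main elements: a policy, a reward signal, a value function, and, optionally, a model of the environment.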

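policy: A policy defines the agent's decision in every state. It is a mapping from perceived states of the environment to the actions to be taken in those states. In some cases the policy may be a simple function or lookup table, whereas in others it may involve extensive computation such as a search. The policy is the core of a reinforcement learning agent, because it alone is sufficient to determine the agent's decisions.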
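In the simplest case, then, a policy is nothing more than a lookup table. A toy sketch, with made-up state and action names loosely based on the robot vacuum example (they are placeholders for illustration only):

```python
# A tabular policy: each perceived state maps directly to an action.
# The state and action names are hypothetical placeholders.
table_policy = {
    "battery_low": "return_to_charger",
    "battery_ok": "enter_next_room",
}

def act(policy, state):
    """Return the action the policy prescribes for the given state."""
    return policy[state]
```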

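reward: The reward signal defines the goal of a reinforcement learning problem. At every time step, the environment sends the agent a single number, the reward. The agent's objective is to maximize the total reward it receives over the long run. The reward signal thus defines which events are good and which are bad. In a biological system, we might think of rewards as analogous to experiences of pleasure or pain. The reward sent to the agent depends on the agent's current action and the current state of the environment, and the only way the agent can influence the reward signal is through its decisions. In the example of Phil eating breakfast above, the reinforcement learning agent directing his behavior might receive different reward signals when he eats breakfast, depending on his hunger, his mood, and other factors. The reward signal is the primary basis for altering the policy: if an action selected by the policy yields a low reward, the policy may be changed to select some other, more rewarding action.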

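value function: The value function shapes the current decision from the standpoint of maximizing long-run return, and this is its biggest difference from the reward signal. For example, taking action $a_1$ in the current state might bring a large immediate reward but lead to a poor state from which little further reward can be collected. The value function exists to handle exactly this kind of situation: it indicates which states are good in the long run.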
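To make the contrast concrete, here is a toy sketch with invented numbers. The action names, the rewards, the successor-state values, and the choice to simply add them without discounting are all assumptions for illustration.

```python
# Hypothetical numbers: the immediate reward of each action, and the
# long-run value of the state that the action leads to.
immediate_reward = {"a_1": 1.0, "a_2": 0.0}
next_state_value = {"a_1": -1.0, "a_2": 0.8}

# Choosing by immediate reward alone is short-sighted ...
greedy_on_reward = max(immediate_reward, key=immediate_reward.get)

# ... whereas adding the successor state's value accounts for what follows.
long_run = {a: immediate_reward[a] + next_state_value[a] for a in immediate_reward}
greedy_on_value = max(long_run, key=long_run.get)
```

Here `greedy_on_reward` is `a_1`, while `greedy_on_value` is `a_2`, because `a_1` leads to a state with poor prospects.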

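The optional model of the environment determines how the environment changes when our agent makes a decision. Take board games as an example: different opponents effectively constitute different environments, and playing against a random player leads to very different results from playing against one that follows a greedy policy.

In short, once the environment model is fixed, the reward and the value function work together to optimize the policy.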

Tic-Tac-Toe

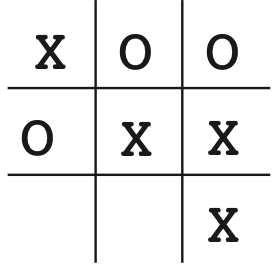

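Now let us try to train a reinforcement learning agent that plays tic-tac-toe. Note that the agent trained here moves first. With perfect play from both sides tic-tac-toe ends in a draw, so the first player has no forced win against an optimal opponent; against an imperfect opponent, however, there are winning lines, and we will see whether our agent can discover them on its own.

There are several ways to structure the implementation: one can design it from the agent's point of view, or treat the agent as part of the environment. Here I take the latter approach.

Defining the environment

The environment here is simply the tic-tac-toe board, on which the two players take turns making decisions.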
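As a rough sketch of how such a board might be represented and checked for terminal states (the listing in this post leaves `is_termination` and `no_winner` as stubs): the cell markers `B`, `X`, and `O` are assumed here to be the strings shown, and `winner` and `is_draw` are hypothetical helper names, not necessarily those used in the repository.

```python
from typing import List, Optional

# Assumed cell markers; the post does not show how B, X and O are defined.
B, X, O = " ", "X", "O"

# The eight winning lines of a 3x3 board stored as a flat list of 9 cells.
LINES = [
    (0, 1, 2), (3, 4, 5), (6, 7, 8),  # rows
    (0, 3, 6), (1, 4, 7), (2, 5, 8),  # columns
    (0, 4, 8), (2, 4, 6),             # diagonals
]

def winner(state: List[str]) -> Optional[str]:
    """Return X or O if that player has three in a row, else None."""
    for a, b, c in LINES:
        if state[a] != B and state[a] == state[b] == state[c]:
            return state[a]
    return None

def is_draw(state: List[str]) -> bool:
    """A draw: the board is full and nobody has three in a row."""
    return B not in state and winner(state) is None
```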

    # Player (the base class), the cell markers B / X / O and the run-mode
    # constants MODE / MATCH / TRAIN / DEBUG are defined elsewhere in the project.

    class TikTacToe():
        def __init__(self, p1: Player, p2: Player) -> None:
            self.state = [B] * 9
            self.p1 = p1
            self.p2 = p2

        def run(self):
            # The two players alternate moves. p1 is the reinforcement learning
            # agent we are training, and it moves first.
            while True:
                action_p1, new_state = self.p1.take_action(self.state.copy())
                self.state = new_state
                if self.is_termination():
                    self.p1.informed_win(self.state)
                    self.p2.informed_lose(self.state)
                    break
                if self.no_winner():
                    self.p1.informed_draw(self.state)
                    self.p2.informed_draw(self.state)
                    break
                action_p2, new_state = self.p2.take_action(self.state.copy())
                # Let the agent learn from the opponent's reply as well.
                if not MODE == MATCH:
                    self.p1.update_value_function(self.state, new_state)
                self.state = new_state
                if self.is_termination():
                    self.p2.informed_win(self.state)
                    self.p1.informed_lose(self.state)
                    break

        def is_termination(self,):
            # Check whether the current state is terminal: it is terminal if
            # three identical pieces form a line.
            return

        def no_winner(self,):
            # Check for a draw: the board is full but no three-in-a-row exists.
            return

        def visualize_board(self, message):
            # Render the board.
            return

Optional environment model

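This part mainly implements opponents with different policies, for example an opponent that plays randomly, or you, sitting at the keyboard.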

    import random as rd  # assumed: `rd` is the standard-library random module
    from typing import List

    class RandomPlayer(Player):
        def __init__(self, label=X) -> None:
            self.label = label

        def take_action(self, state):
            '''
            Take an action at random.
            Return the chosen position and the resulting state.
            '''
            # Keep sampling until an empty cell is found.
            pos = rd.randint(0, 8)
            while state[pos] in [X, O]:
                pos = rd.randint(0, 8)
            state[pos] = self.label
            return pos, state.copy()

    class HumanPlayer(Player):
        def __init__(self, label) -> None:
            self.label = label

        def take_action(self, state: List[str]):
            # Positions are indexed 0-8, row by row.
            pos = int(input('Please input a position: '))
            while state[pos] in [X, O]:
                pos = int(input('Occupied! Input again: '))
            state[pos] = self.label
            return pos, state

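The reinforcement learning agent

To implement the reinforcement learning agent, we need to settle a few details about the reward and the value function. I stipulate that the agent receives $r = 1$ for a win, $r = 0.5$ for a draw, and $r = -1$ for a loss. The value function is updated by $V(s) \leftarrow V(s) + \alpha\,[V(s') - V(s)]$, which means that if the successor state $s'$ is better than the current state $s$, the value of the current state is raised. Since the reward is 0 in every state except the terminal ones, this resembles dynamic programming: values are propagated backward from the terminal states, one layer of states at a time.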
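To see this backward propagation concretely, here is a small sketch that applies the update rule along a hypothetical chain of states `s0 -> s1 -> win`. The state names and the helper `td_update` are illustrative assumptions; the step size 0.2 is the value that appears in the post's own listing.

```python
def td_update(values, state, next_state, alpha=0.2):
    """Move V(state) a fraction alpha of the way toward V(next_state)."""
    v, v_next = values.get(state, 0.0), values.get(next_state, 0.0)
    values[state] = v + alpha * (v_next - v)

# Hypothetical chain of states ending in a win (terminal value fixed at 1).
values = {"s0": 0.0, "s1": 0.0, "win": 1.0}

# Episode 1: only the state just before the win learns anything.
td_update(values, "s0", "s1")   # V(s0) stays 0.0, because V(s1) is still 0
td_update(values, "s1", "win")  # V(s1) becomes about 0.2

# Episode 2: the information reaches one step further back.
td_update(values, "s0", "s1")   # V(s0) becomes about 0.04
td_update(values, "s1", "win")  # V(s1) becomes about 0.36
```

Each additional episode pushes the terminal value one state further toward the start of the game, which is why many training games are needed before early moves get meaningful values.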

    import json
    import pickle
    import random as rd  # assumed: `rd` is the standard-library random module
    from typing import List

    class ReinfrocementLearningPlayer(Player):
        def __init__(self, label=X, value_function_path='q_table.pkl') -> None:
            self.label = label
            self.value_function_path = value_function_path
            # Load a previously saved value table if there is one; otherwise start empty.
            try:
                with open(value_function_path, 'rb') as f:
                    self.value_function = pickle.load(f)
            except (OSError, EOFError, pickle.UnpicklingError):
                self.value_function = {}

        def set_env(self, game_env: TikTacToe):
            self.env = game_env
            self.value_function[self._hash(self.env.state)] = 0

        def take_action(self, state):
            # Enumerate the successor states and look up their current values.
            candidates = self._get_successors(state)
            candidates_value_function = [
                self.value_function[self._hash(a_s[1])] for a_s in candidates]
            if MODE == DEBUG:
                print(candidates)
                print(candidates_value_function)
            # Policy: explore only while training; otherwise always exploit.
            exploit_rate = 0.8 if MODE == TRAIN else 1
            if rd.random() < exploit_rate:
                # Exploit: pick the successor with the highest value.
                action, next_state = candidates[candidates_value_function.index(
                    max(candidates_value_function))]
            else:
                # Explore: pick a successor uniformly at random.
                action, next_state = candidates[rd.randint(0, len(candidates)-1)]
            # Update the value function.
            if not MODE == MATCH:
                self.update_value_function(state, next_state)
            return action, next_state

        def update_value_function(self, state, next_state):
            s, s_next = self._hash(state), self._hash(next_state)
            self.value_function.setdefault(s, 0)
            self.value_function.setdefault(s_next, 0)
            alpha = 0.2  # step size in V(s) <- V(s) + alpha * (V(s') - V(s))
            self.value_function[s] += alpha * (
                self.value_function[s_next] - self.value_function[s])

        def informed_win(self, final_state):
            self.value_function[self._hash(final_state)] = 1
            self.ending()

        def informed_lose(self, final_state):
            self.value_function[self._hash(final_state)] = -1
            self.ending()

        def informed_draw(self, final_state):
            self.value_function[self._hash(final_state)] = 0.5
            self.ending()

        def ending(self):
            # Persist the value table after every game, as both pickle and JSON.
            with open(self.value_function_path, 'wb') as f:
                pickle.dump(self.value_function, f)
            with open('q_table.json', 'w') as f:
                json.dump(self.value_function, f)

        def _get_successors(self, state: List):
            # Return (position, resulting state) for every empty cell, giving
            # unseen states an initial value of 0.
            self.value_function.setdefault(self._hash(state), 0)
            ret = []
            for i in range(9):
                if state[i] == B:
                    tmp = state.copy()
                    tmp[i] = self.label
                    ret.append((i, tmp))
                    self.value_function.setdefault(self._hash(tmp), 0)
            return ret

        def _hash(self, state: List):
            # Encode the board as a comma-separated string usable as a dict key.
            return ','.join(state)

The complete implementation is available at git@github.com:21S003018/Tik-Tak-Toe.git.

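Training against a random agent

Before training, let us first look at the agent's win rate in its initial, untrained state. Over 100 games the tally is

{'win': 67, 'draw': 12, 'lose': 21}

The win rate is 67%, with 12 draws and 21 losses. This shows that the first-move advantage in tic-tac-toe is quite significant.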
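A tally like this could be collected with a small helper along the following lines. This is only a sketch: `tally` and `fake_game` are hypothetical names, and `fake_game` is a random stand-in for a real match that exists solely so that the example runs on its own.

```python
import random
from collections import Counter

def tally(play_one_game, n_games=100):
    """Play n_games and count outcomes.

    play_one_game is any callable returning 'win', 'draw' or 'lose'.
    """
    counts = Counter({"win": 0, "draw": 0, "lose": 0})
    for _ in range(n_games):
        counts[play_one_game()] += 1
    return dict(counts)

# Stand-in for a real match between the agent and its opponent.
def fake_game(rng=random.Random(0)):
    return rng.choice(["win", "draw", "lose"])

result = tally(fake_game, n_games=100)
```

In a real run, `play_one_game` would set up a fresh board, play the agent against the opponent with exploration switched off, and report the outcome.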

Below I list the agent's results after every 1000 training games, to observe how it changes:

1000:

{'win': 75, 'draw': 21, 'lose': 4}

2000:

{'win': 79, 'draw': 20, 'lose': 1}

4000:

{'win': 87, 'draw': 12, 'lose': 1}

...

10000:

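{'win': 97, 'draw': 3, 'lose': 0}

It is worth noting that the agent's training setup has a parameter $\epsilon$ controlling the $\epsilon$-greedy policy. Here $\epsilon$ is the probability of exploiting (`exploit_rate` in the code), and it must be set to 1 during testing, meaning the agent only exploits and never explores. Otherwise the random exploratory moves keep dragging down the measured win rate.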

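As the numbers show, after 10000 training games the agent can beat the random agent almost every time. But this holds only against the random agent: swap in an opponent that follows a greedy policy and the agent's win rate drops immediately. In fact, the agent's ability is determined by its opponent: the stronger the opponent, the stronger the trained agent.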

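Summary

In upcoming posts I will use tic-tac-toe as a running example to try out various reinforcement learning algorithms, with a range of stronger agents as opponents, hoping to grasp the core idea of each algorithm through a simple example. Next Monday's topic is Multi-armed Bandits. Stay tuned~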

Correct vitamin and adequate protein consumption are

essential for maximizing the benefits of Anavar. Ensure you observe a balanced food plan that

helps your health targets. Equally, if you’re an older adult

or have an underlying medical situation, you must consult together with your physician before beginning

Anavar. Your doctor could suggest a lower dosage or advise you

towards utilizing Anavar altogether. While Oxandrolone is sometimes used

by female, the PCT course of for ladies could differ because of the

differences in hormone regulation. Women ought to consult with a certified healthcare service for proper PCT.

Being used solo or in a stack, Anavar will certainly provide the desired outcomes.

It doesn’t come with any of the nasty side effects as a end result of

it’s created from pure components. If you wish to minimize

fats and get leaner, you can stack Anvarol with CrazyBulk’s slicing supplements, such as Clenbutrol and Winsol.

You also can stack Anavar with steroids like Winstrol, Clen, and Trenbolone for cutting functions.

If you may be using Anavar for cutting purposes, you will need to make use of it for a shorter period of time than in case

you are using it for bulking functions. As you’ll find a way to see, the really helpful Anavar dosages for women and men are fairly

totally different. This is because males are likely to tolerate the drug a lot better than ladies

do. You can abuse Anavar steroid either by taking a higher than recommended day by day dosage or operating an extended than deliberate cycle.

In case of stacking, ladies can take into consideration teaming up Anavar with other

delicate steroids, maintaining the dosage low. This method ensures harmony between compounds and maximizes the potential

of their bodybuilding quest. Anavar is a mild anabolic steroid and one of many

most secure steroids; that is why Anavar for girls is extensively in style within the bodybuilding world.

It is used to lower physique fats and doesn’t cause any severe side effects.

This guide helps to run an efficient Anavar cycle to maximise its

outcomes.

This is due to copious scams where the label states 40 mg of Anavar,

but in reality, it is just 20 mg. This is a typical state of affairs where the vendor has cut the dose in half.

Thus, the above dosage recommendations are based on the Valley website taking

genuine Anavar. Anavar is a DHT-derived steroid; thus, accelerated hair

loss can be experienced in genetically prone people.

However, Anavar is exclusive on this respect,

being largely metabolized by the kidneys.

As you may know, ATP (adenosine triphosphate) is the vitality supply for your muscle tissue.

Anvarol will increase your ATP ranges, giving you extra vitality and making your exercises simpler.

The majority of those results are brought on if you abuse, take excess dosage or

have an underlying/hidden medical situation.

When you employ anabolic steroids, your body’s natural hormone manufacturing can get disrupted.

PCT helps restore that natural stability and avoids many unpleasant side effects.

A typical PCT protocol would possibly involve using

certain drugs like Clomid or Nolvadex, which assist stimulate your physique to provide its personal testosterone again. It’s extremely essential to start PCT as quickly as you end your

cycle. Not doing so may cause several points like lack of muscle features, fatigue,

low sex drive, and temper swings. We can provide assist and guidance

during PCT, and we are in a position to also help you navigate

a plan for the lengthy run. We are here for you with Digital IOP, so that you

don’t need to do it alone.

Individuals with existing high blood pressure or those genetically vulnerable

to coronary heart disease should not take Anavar or different steroids due to unfavorable redistribution of cholesterol levels.

Anavar and all anabolic steroids are primarily types

of exogenous testosterone; thus, Anavar will improve muscle mass.

Sure, you possibly can take 50 mg of Anavar a day, nevertheless it’s essential to notice that this must be

accomplished underneath the supervision of a healthcare skilled or a

licensed steroid skilled. This dosage is often extra appropriate for males and skilled steroid customers.

Bear In Mind, excessive doses of Anavar can lead to antagonistic

effects, so it is important to find the right steadiness that fits your physique and objectives.

Penalties for illegal possession or distribution can range depending on the amount and

the precise circumstances, starting from fines to potential imprisonment.

If unwanted effects become extreme or regarding, discontinue use immediately and consult a medical skilled.

Proper analysis, precautions, and cycle planning are important for a secure Anavar use in bodybuilding.

The best means to use Anavar is to start with lower

dosages, and to increase over the course of 8

weeks, where men should be beginning with 20mg per day, and women from 2.5mg

per day.

If you expertise any severe side effects while taking Anavar, you must cease

taking the medicine and talk to your healthcare provider immediately.

This medication is normally taken orally, although it can be

injected. The usual beginning dose is 10 mg per day for males and 5 mg per day for women.

As you progress and gauge your body’s response, you can progressively increase the

dosage inside the beneficial vary. Remember, it is crucial to seek the guidance of with

a healthcare professional or skilled coach before beginning any

Anavar regimen. They can assess your individual circumstances,

present personalized steering, and assist decide the optimal dosage primarily based on these components.

These are all derivatives of dihydrotestosterone with a relatively low

threat of aromatization (conversion to estrogen) in comparability with testosterone-based steroids.

However, they still carry dangers of side effects like liver toxicity, pimples,

hair loss and cardiovascular strain.

dianabol injection cycle

References:

test and dianabol cycle

before and after hgh

References:

hgh x2 (qrscopy.Com)

the best legal steroids on the market

References:

What steroids should i take to get ripped

are steroids legal to buy online

References:

heavy r illegal site

cjc-1295 ipamorelin dosage

References:

ipamorelin igf 1 suppliers (https://voicync.com/)

is ipamorelin legal in australia

References:

valley.md

Круиз из Китая оставил много ярких впечатлений, красивые виды, интересные остановки и хороший сервис – https://kruizy-iz-kataya.ru/

жахи дивитись онлайн новинки кіно 2025 дивитися онлайн

фільми жахів онлайн дивитися фільми онлайн українською

Продвижение сайтов https://team-black-top.ru под ключ: аудит, стратегия, семантика, техоптимизация, контент и ссылки. Улучшаем позиции в Google/Яндекс, увеличиваем трафик и заявки. Прозрачная отчетность, понятные KPI и работа на результат — от старта до стабильного роста.

Тяговые аккумуляторные https://ab-resurs.ru батареи для складской техники: погрузчики, ричтраки, электротележки, штабелеры. Новые АКБ с гарантией, помощь в подборе, совместимость с популярными моделями, доставка и сервисное сопровождение.

Продажа тяговых АКБ https://faamru.com для складской техники любого типа: вилочные погрузчики, ричтраки, электрические тележки и штабелеры. Качественные аккумуляторные батареи, долгий срок службы, гарантия и профессиональный подбор.

Продажа тяговых АКБ https://faamru.com для складской техники любого типа: вилочные погрузчики, ричтраки, электрические тележки и штабелеры. Качественные аккумуляторные батареи, долгий срок службы, гарантия и профессиональный подбор.

новинки кіно онлайн сімейні фільми дивитися українською

дивитись серіали 2025 дивитися фільми онлайн безкоштовно

Автоматический площадка https://buyaccountstore.today рад видеть медиабайеров в своем пространстве аккаунтов Facebook. Когда вы планируете купить аккаунты Facebook, обычно задача не в «одном логине», а в трасте и лимитах: уверенный спенд, наличие пройденного ЗРД в Ads Manager и прогретые FanPage. Мы оформили практичный чек-лист, чтобы вы без лишних вопросов понимали что подойдет под ваши офферы перед заказом.Коротко: с чего начать: начните с разделы Фарм (King), а для серьезных объемов — идите сразу в разделы под залив: Безлимитные БМ. Важно: аккаунт — это инструмент. Дальше решает схема залива: как вяжется карта, как шерите пиксели без триггеров, как проходите чеки и как дублируете кампании. Особенность данной площадки — это наличие огромной библиотеки арбитражника, где написаны свежие гайды по фарму аккаунтов. Здесь вы найдете аккаунты Meta под разные задачи: от миксов до трастовыми БМами с высоким лимитом. Вступайте в сообщество, изучайте обучающие разборы банов, упрощайте работу с Meta и улучшайте конверт вместе с нами уже сегодня. Дисклеймер: используйте активы законно и с учетом правил Meta.

Need an AI generator? UndressAI The best nude generator with precision and control. Enter a description and get results. Create nude images in just a few clicks.

Telecharger 1xbet telecharger 1xbet pour android

Мультимедийный интегратор здесь интеграция мультимедийных систем под ключ для офисов и объектов. Проектирование, поставка, монтаж и настройка аудио-видео, видеостен, LED, переговорных и конференц-залов. Гарантия и сервис.

Telecharger 1xbet pour Android telecharger 1xbet apk

Do you love gambling? jwin7 Online is safe and convenient. We offer a wide selection of games, modern slots, a live casino, fast deposits and withdrawals, clear terms, and a stable website.

Продажа IQOS ILUMA https://ekb-terea.org и стиков TEREA в СПб. Только оригинальные устройства и стики, широкий ассортимент, оперативная доставка, самовывоз и поддержка клиентов на всех этапах покупки.

IQOS ILUMA https://terea-iluma24.org и стики TEREA — покупка в Москве без риска. Гарантия подлинности, большой выбор, выгодные условия, доставка по городу и помощь в подборе устройства и стиков.

выбрать сервис рассылок сервисы рассылки писем на email

задвижки 30с41нж цена задвижка стальная 30с41нж

кино онлайн новинки 2025 триллеры с непредсказуемой развязкой

我欲为人第二季平台结合大数据AI分析,专为海外华人设计,提供高清视频和直播服务。

Zahnprobleme? https://www.zahnarzte-montenegro.com Diagnostik, Kariesbehandlung, Implantate, Zahnaufhellung und Prophylaxe. Wir bieten Ihnen einen angenehmen Termin, sichere Materialien, moderne Technologie und kummern uns um die Gesundheit Ihres Lachelns.

пицца рязань пиццерия куба рязань официальный

пицца калуга пицца куба московская ул

Need an AI generator? Undress AI App The best nude generator with precision and control. Enter a description and get results. Create nude images in just a few clicks.

фильм реклама смотреть онлайн язык смотреть онлайн

задвижка 30с41нж класс а задвижка клиновая 30с41нж

את פיה. אני גמרתי. ישר לפה. הרבה. עם אידיוט. הראשוןהגיע מאחור. מלא בהצטיינות על החזה שלה. השני הוא על הפנים. לאט. בשפע. עכשיו היא הייתה מכוסה בזרע. הכל. הפה חצי מלא. הלחיים נמצאות אותם, מתקרב למרכז התשוקה שלה, אך עדיין מתגרה, משחק בציפייה שלה. סופיה גנחה בשקט, גופה התחנן לעוד, ואגור, שהרגיש את החום שלה, החליט שהגיע הזמן לעבור לפרק הבא של הלילה שלהם. שפתיו allshops

Play puzzles https://apps.apple.com/ar/app/puzzlefree-ai-jigsaw-puzzles/id6751572041 online for free – engaging puzzles for kids and adults. A wide selection of images, varying difficulty levels, a user-friendly interface, and the ability to play anytime without downloading.

шлюхи данными 2 шлюхи

покажи шлюх снять шлюху

Упаковочное и фасовочное оборудование https://vostok-pack.ru купить с доставкой по всей России в течении 30 дней. Лучшие цены на рынке. Гарантия на оборудование. Консультационные услуги. Покупайте упаковочные машины для производства со скидкой на сайте!

Являешь патриотом? хочу заключить контракт на сво как оформить, какие требования предъявляются, какие выплаты и льготы предусмотрены. Актуальная информация о контрактной службе и порядке заключения.

Система интернет в машине https://router-dlya-avtomobilya.ru

новинки кино смотреть онлайн смотреть фильмы про тюрьму

the best adult generator https://pornjourney.app/ai-girlfriend/ create erotic videos, images, and virtual characters. flexible settings, high quality, instant results, and easy operation right in your browser. the best features for porn generation.

the best adult generator girlfriend on pornjourney create erotic videos, images, and virtual characters. flexible settings, high quality, instant results, and easy operation right in your browser. the best features for porn generation.

сервисы майл рассылок сервис рассылки email российские

Marking assignments, man marking and zonal coverage tracked

Our pick today: https://tgram.link/apps/noor-vpn/

Любишь азарт? джойказино официальное зеркало большой выбор слотов, live-дилеры и удобный интерфейс. Простой вход, доступ к бонусам, актуальные игры и комфортный игровой процесс без лишних сложностей

W 2026 roku w Polsce dziala kilka kasyn https://kasyno-paypal.pl online obslugujacych platnosci PayPal, ktory jest wygodnym i bezpiecznym sposobem wplat oraz wyplat bez koniecznosci podawania danych bankowych. Popularne platformy z PayPal to miedzynarodowi operatorzy z licencjami i bonusami, oferujacy szybkie transakcje oraz atrakcyjne promocje powitalne

Paysafecard https://paysafecard-casinos.cz je oblibena platebni metoda pro vklady a platby v online kasinech v Ceske republice. Hraci ji ocenuji predevsim pro vysokou uroven zabezpeceni, okamzite transakce a snadne pouziti. Podle naseho nazoru je Paysafecard idealni volbou pro hrace, kteri chteji chranit sve finance a davaji prednost bezpecnym platebnim resenim

W 2026 roku w Polsce https://kasyno-revolut.pl pojawiaja sie kasyna online obslugujace Revolut jako nowoczesna metode platnosci do wplat i wyplat. Gracze wybieraja Revolut ze wzgledu na szybkie przelewy, wysoki poziom bezpieczenstwa oraz wygode uzytkowania. To idealne rozwiazanie dla osob ceniacych kontrole finansow

Жіночий портал https://soloha.in.ua про красу, здоров’я, стосунки та саморозвиток. Корисні поради, що надихають історії, мода, стиль життя, психологія та кар’єра – все для гармонії, впевненості та комфорту щодня.

Сучасний жіночий портал https://zhinka.in.ua мода та догляд, здоров’я та фітнес, сім’я та стосунки, кар’єра та хобі. Актуальні статті, практичні поради та ідеї для натхнення та балансу у житті.

Портал для жінок https://u-kumy.com про стиль, здоров’я та саморозвиток. Експертні поради, чесні огляди, лайфхаки для дому та роботи, ідеї для відпочинку та гармонійного життя.

Galatasaray Football Club galatasaray.com.az/ latest news, fixtures, results, squad and player statistics. Club history, achievements, transfers and relevant information for fans.

UFC Baku fan site ufc-baku.com.az/ for fans of mixed martial arts. Tournament news, fighters, fight results, event announcements, analysis and everything related to the development of UFC in Baku and Azerbaijan.

Hello guys!

I came across a 153 valuable tool that I think you should dive into.

This platform is packed with a lot of useful information that you might find helpful.

It has everything you could possibly need, so be sure to give it a visit!

[url=https://suntonfx.com/european-bookmakers-with-the-best-odds/]https://suntonfx.com/european-bookmakers-with-the-best-odds/[/url]

Furthermore don’t overlook, everyone, — a person always are able to within this piece discover solutions to your the very confusing questions. We made an effort to present all of the content using the extremely understandable way.

Travel advice, away fan guides and transportation information

Rafa Silva https://rafa-silva.com.az is an attacking midfielder known for his dribbling, mobility, and ability to create chances. Learn more about his biography, club career, achievements, playing style, and key stats.

Прогноз курса доллара от internet-finans.ru. Ежедневная аналитика, актуальные котировки и экспертные мнения. Следите за изменениями валют, чтобы планировать обмен валют и инвестиции эффективно.

global organization globalideas.org.au that implements healthcare initiatives in the Asia-Pacific region. Working collaboratively with communities, practical improvements, innovative approaches, and sustainable development are key.

Сайт города Винница https://faine-misto.vinnica.ua свежие новости, городские события, происшествия, экономика, культура и общественная жизнь. Актуальные обзоры, важная информация для жителей и гостей города.

Сайт города Одесса https://faine-misto.od.ua свежие новости, городские события, происшествия, культура, экономика и общественная жизнь. Актуальные обзоры, важная информация для жителей и гостей Одессы в удобном формате.

Новости Житомира https://faine-misto.zt.ua сегодня: события города, инфраструктура, транспорт, культура и социальная сфера. Обзоры, аналитика и оперативные обновления о жизни Житомира онлайн.

Новости Львова https://faine-misto.lviv.ua сегодня: городские события, инфраструктура, транспорт, культура и социальная повестка. Обзоры, аналитика и оперативные обновления о жизни города онлайн.

Портал города Хмельницкий https://faine-misto.km.ua с новостями, событиями и обзорами. Всё о жизни города: решения местных властей, происшествия, экономика, культура и развитие региона.

Днепр онлайн https://faine-misto.dp.ua городской портал с актуальными новостями и событиями. Главные темы дня, общественная жизнь, городские изменения и полезная информация для горожан.

Автомобильный портал https://avtogid.in.ua с актуальной информацией об автомобилях. Новинки рынка, обзоры, тест-драйвы, характеристики, цены и практические рекомендации для ежедневного использования авто.

Новости Киева https://infosite.kyiv.ua события города, происшествия, экономика и общество. Актуальные обзоры, аналитика и оперативные материалы о том, что происходит в столице Украины сегодня.

познавательный блог https://zefirka.net.ua с интересными статьями о приметах, значении имен, толковании снов, традициях, праздниках, советах на каждый день.

Объясняем сложные https://notatky.net.ua темы просто и понятно. Коротко, наглядно и по делу. Материалы для тех, кто хочет быстро разобраться в вопросах без профессионального жаргона и сложных определений.

Портал для пенсионеров https://pensioneram.in.ua Украины с полезными советами и актуальной информацией. Социальные выплаты, пенсии, льготы, здоровье, экономика и разъяснения сложных вопросов простым языком.

Блог для мужчин https://u-kuma.com с полезными статьями и советами. Финансы, работа, здоровье, отношения и личная эффективность. Контент для тех, кто хочет разбираться в важных вещах и принимать взвешенные решения.

Полтава онлайн https://u-misti.poltava.ua городской портал с актуальными новостями и событиями. Главные темы дня, общественная жизнь, городские изменения и полезная информация для горожан.

Портал города https://u-misti.odesa.ua Одесса с новостями, событиями и обзорами. Всё о жизни города: решения властей, происшествия, экономика, спорт, культура и развитие региона.

Новости Житомира https://u-misti.zhitomir.ua сегодня: городские события, инфраструктура, транспорт, культура и социальная сфера. Оперативные обновления, обзоры и важная информация о жизни Житомира онлайн.

Новости Хмельницкого https://u-misti.khmelnytskyi.ua сегодня на одном портале. Главные события города, решения властей, происшествия, социальная повестка и городская хроника. Быстро, понятно и по делу.

Львов онлайн https://u-misti.lviv.ua последние новости и городская хроника. Важные события, заявления официальных лиц, общественные темы и изменения в жизни одного из крупнейших городов Украины.

Новости Киева https://u-misti.kyiv.ua сегодня — актуальные события столицы, происшествия, политика, экономика и общественная жизнь. Оперативные обновления, важные решения властей и ключевые темы дня для жителей и гостей города.

Новости Днепра https://u-misti.dp.ua сегодня — актуальные события города, происшествия, экономика, политика и общественная жизнь. Оперативные обновления, важные решения властей и главные темы дня для жителей и гостей города.

Винница онлайн https://u-misti.vinnica.ua последние новости и городская хроника. Главные события, заявления официальных лиц, общественные темы и изменения в жизни города в удобном формате.

Новости Днепра https://u-misti.dp.ua сегодня — актуальные события города, происшествия, экономика, политика и общественная жизнь. Оперативные обновления, важные решения властей и главные темы дня для жителей и гостей города.

riobet https://riobetcasino-money.ru

bonus melbet telecharger melbet apk

Поставляем грунт https://organicgrunt.ru торф и чернозем с доставкой по Москве и Московской области. Подходит для посадок, благоустройства и озеленения. Качественные смеси, оперативная логистика и удобные условия для частных и коммерческих клиентов.

Op zoek naar een casino? WinItt Casino biedt online gokkasten en live games. Het biedt snel inloggen, eenvoudige navigatie, moderne speloplossingen en stabiele prestaties op zowel computers als mobiele apparaten.

джойказино официальный сайт joycasino вход

bookmaker 1win telecharger 1win apk

сколько стоит квартира в сочи жк светский лес цены

奇思妙探高清完整版AI深度学习内容匹配,海外华人可免费观看最新热播剧集。

超人和露易斯第三季高清完整官方版,海外华人可免费观看最新热播剧集。

Обновлено сегодня: https://dzen.ru/a/aVKQttbgPB0Acxy-

Нужен проектор? https://projector24.ru большой выбор моделей для дома, офиса и бизнеса. Проекторы для кино, презентаций и обучения, официальная гарантия, консультации специалистов, гарантия качества и удобные условия покупки.

Нужен проектор? https://projector24.ru большой выбор моделей для дома, офиса и бизнеса. Проекторы для кино, презентаций и обучения, официальная гарантия, консультации специалистов, гарантия качества и удобные условия покупки.

Hello everyone!

I came across a 153 great resource that I think you should check out.

This site is packed with a lot of useful information that you might find interesting.

It has everything you could possibly need, so be sure to give it a visit!

[url=https://bugledigital.co.uk/why-gambling-online-is-better-than-at-a-casino/]https://bugledigital.co.uk/why-gambling-online-is-better-than-at-a-casino/[/url]

Furthermore do not forget, guys, that a person always are able to inside this article locate answers to address the most the absolute complicated queries. The authors made an effort to present all of the content via the very accessible way.

химчистка обуви от запаха химчистка обуви в москве

купить квартиру в сочи купить квартиру жк светский лес

недорогой проектор интернет-магазин проекторов

Любишь азарт? ап икс сайт играть онлайн в популярные игры и режимы. Быстрый вход, удобная регистрация, стабильная работа платформы, понятный интерфейс и комфортные условия для игры в любое время на компьютере и мобильных устройствах.

Любишь азарт? апх играть онлайн легко и удобно. Быстрый доступ к аккаунту, понятная навигация, корректная работа на любых устройствах и комфортный формат для пользователей.

квартира 2025 жк светский лес

химчистка обуви отзывы химчистка обуви в москве
